5 Books a Working AI Engineer Actually Read for Real-World Accuracy Improvement and Product Development

This post is for engineers who are already building with AI but can't seem to improve accuracy. Every book here was chosen for practical, real-world knowledge — not tutorials.

If you're not yet comfortable with Python, I'd strongly recommend starting there first — though the concepts in these books are valuable even before you're fully fluent in it.

TL;DR

- Being able to call AI APIs isn't enough. Understanding model internals is what enables real performance improvement

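- Four of the five books are O'Reilly titles (the English edition of Deep Learning from the Basics is published by Packt). They are written by practitioners, including Hugging Face engineers, and draw on the experience of people working at OpenAI, Anthropic, Google, and NVIDIA. These are the books that ML engineers in the West actually read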

- Reading order is flexible; adjust based on your level and goals. The baseline path is: Deep Learning from the Basics → Transformers → Generative AI → AI Engineering. If you already know the basics, jump straight to Transformers

Why Understanding the Internals Directly Impacts Your Work

More and more engineers can ship AI-powered products. But questions like "why isn't accuracy improving?", "should I fine-tune or not?", and "how do I reduce hallucinations?" are very hard to answer well without understanding what's happening inside the model.

Three concrete examples of how that understanding changes things:

① Understanding tokenizers changes how you read LLM behavior

LLMs don't process raw text. They split input into tokens first. Whether "Tokyo" becomes one token or two depends on the model, and non-English languages generally consume more tokens than English. Without knowing this, you'll never understand why costs spike on certain inputs or why the model produces strange output in edge cases.
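To make the "one token or two" point concrete, here is a minimal sketch in plain Python. It uses a greedy longest-match splitter as a stand-in for a real tokenizer (production models typically use learned schemes such as BPE or WordPiece), and both vocabularies are hypothetical, invented only for this illustration.

```python
def greedy_tokenize(text, vocab):
    """Split text into the longest pieces found in vocab, left to right.

    Characters that are not covered by the vocabulary fall back to
    single-character tokens.
    """
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest candidate first, then shrink one character at a time.
        for end in range(len(text), i, -1):
            piece = text[i:end]
            if piece in vocab or end == i + 1:
                tokens.append(piece)
                i = end
                break
    return tokens


# Two hypothetical vocabularies standing in for two different models.
vocab_a = {"Tokyo", "To", "kyo", "is", " ", "big"}
vocab_b = {"To", "kyo", "is", " ", "big"}

tokens_a = greedy_tokenize("Tokyo is big", vocab_a)
tokens_b = greedy_tokenize("Tokyo is big", vocab_b)

print(tokens_a, len(tokens_a))  # ['Tokyo', ' ', 'is', ' ', 'big'] 5
print(tokens_b, len(tokens_b))  # ['To', 'kyo', ' ', 'is', ' ', 'big'] 6
```

The same string costs one more token under the second vocabulary. With a real model the effect is the same in kind: token count, and therefore cost and context usage, depends on the vocabulary the model was trained with.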

② Fine-tuning: data quality matters more than hyperparameters

Obsessing over learning rate and weight decay is often the wrong fight. In most cases, the size and quality of your training data have far more impact on final accuracy than hyperparameter tuning. If you keep tweaking hyperparameters and see no improvement, that is usually a signal to fix your data first. You only see this clearly when you understand how model training actually works.
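One cheap way to act on "fix your data first" is a quick audit for duplicates and conflicting labels before touching any hyperparameter. The sketch below is only an illustration: the `audit_labels` helper and the toy dataset are hypothetical, and a real audit would likely go further (near-duplicates, class balance, train/test leakage).

```python
from collections import defaultdict


def audit_labels(examples):
    """Find repeated texts and texts that appear with more than one label."""
    labels_by_text = defaultdict(list)
    for text, label in examples:
        # Light normalization so case and whitespace differences are caught.
        key = " ".join(text.lower().split())
        labels_by_text[key].append(label)

    duplicates = {t: len(ls) for t, ls in labels_by_text.items() if len(ls) > 1}
    conflicts = {
        t: sorted(set(ls)) for t, ls in labels_by_text.items() if len(set(ls)) > 1
    }
    return duplicates, conflicts


# Hypothetical toy dataset of (text, label) pairs.
examples = [
    ("Great product", "positive"),
    ("great  product", "negative"),  # same text, conflicting label
    ("Arrived late", "negative"),
    ("Arrived late", "negative"),  # exact duplicate
    ("Works fine", "positive"),
]

duplicates, conflicts = audit_labels(examples)
print("duplicates:", duplicates)
print("conflicts:", conflicts)
```

Conflicting labels put a ceiling on achievable accuracy that no learning rate can lift, which is why this kind of check tends to pay off before a hyperparameter sweep.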

③ Understanding Attention gives you a principled approach to prompt and RAG design

Attention determines which tokens in the context window the model focuses on. Once you understand this, questions like "why does information buried in the middle of a long context get ignored?", "how should I chunk documents for RAG?", and "why does prompt structure affect output?" become answerable with reasoning, not guesswork.
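For intuition, here is a minimal sketch of scaled dot-product attention for a single query, written in plain Python. It leaves out everything a real Transformer adds on top (learned projections, multiple heads, positional information, many layers), and the toy vectors are made up for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    d = len(query)
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys
    ]
    weights = softmax(scores)
    dim = len(values[0])
    # The output is a weighted average of the value vectors.
    output = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim)]
    return weights, output


# Three toy "tokens" in the context, each with a key and a value vector.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [2.0, 0.0]

weights, output = attention(query, keys, values)
print("weights:", [round(w, 3) for w in weights])
print("output:", [round(o, 3) for o in output])
```

The token whose key is orthogonal to the query gets the smallest weight and contributes least to the output. That is the mechanism behind the design questions above: content only influences the answer to the extent that it wins attention weight.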

Reading these books moves you from "it works somehow" to "I know why it works." That shift directly improves the speed and quality of your engineering decisions.

Why Mostly O'Reilly Books?

Four of the five books in this list are published by O'Reilly; the exception is the English edition of Deep Learning from the Basics, which comes from Packt. The reason is straightforward.

The authors are practitioners at the frontier. Hugging Face engineers wrote two of these books, and AI Engineering draws on interviews with practitioners at OpenAI, Anthropic, Google, and NVIDIA. They read as distillations of knowledge used on the job, not as textbook overviews. These titles are regularly referenced in communities like Reddit's r/MachineLearning and Hacker News.

The Japanese translations are consistently high quality, but if you read English you have direct access to the originals, which often receive updates before any translation does.

The Books

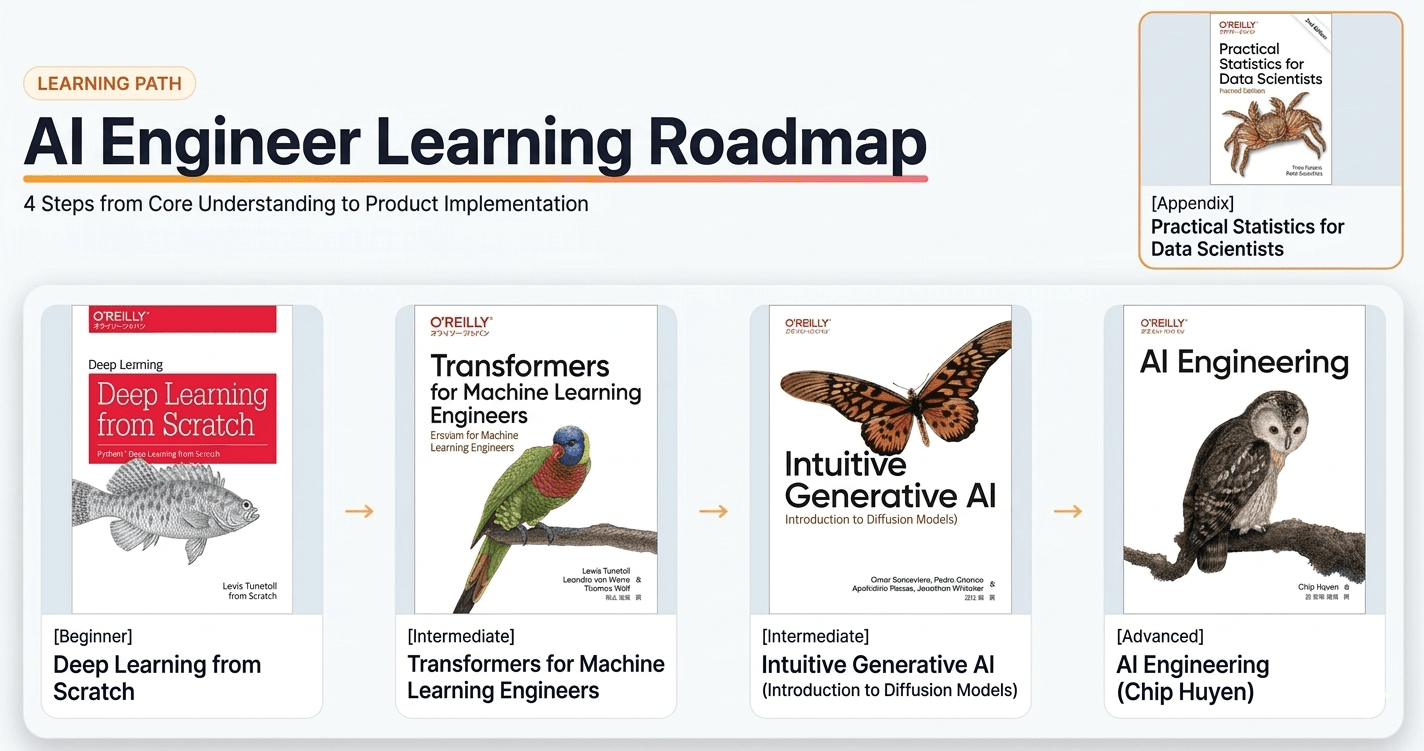

Reading Order (Adjust to Your Level)

The order below is a baseline, not a rule.

[Beginner: Start here if you're new to deep learning]

Deep Learning from the Basics

↓ Build neural nets by hand to understand how they work

Note: Skip if you already understand backprop and gradient descent

[Intermediate: Architecture — the fastest path for most engineers]

Natural Language Processing with Transformers

↓ Transformer internals + real implementation with Hugging Face

[Intermediate: Generative AI — go here first if images/audio are your focus]

Hands-On Generative AI

↓ Transformers + Diffusion models, applied across modalities

[Advanced: Productionization — for engineers who want to evaluate, improve, and ship]

AI Engineering (Chip Huyen)

↓ Evaluation, RAG, agents, inference optimization

[Read in parallel: For those who want to understand math and metrics rigorously]

Practical Statistics for Data Scientists

→ Statistical grounding for loss functions, evaluation metrics, A/B tests

1. Deep Learning from the Basics — Start Here

Author: Koki Saito

Publisher: Packt Publishing

Audience: Engineers with basic Python who want to understand deep learning from the ground up


All sample code is publicly available on GitHub. Clone it and run it alongside the book — this is meant to be read with your hands on a keyboard.

This book teaches deep learning by implementing everything from scratch — no frameworks. Backpropagation, gradient descent, CNNs — you build them all in plain Python. The result is that the neural networks you previously treated as black boxes become things you actually understand.
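To give a flavor of the "no frameworks" approach, here is a small sketch in plain Python, not taken from the book: gradient descent on a one-unit linear model, with the gradients derived by hand via the chain rule and checked against a numerical gradient. The data and hyperparameters are made up for illustration.

```python
def loss(w, b, data):
    """Mean squared error of the model y = w * x + b."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def gradients(w, b, data):
    """Analytic gradients of the loss with respect to w and b (chain rule)."""
    n = len(data)
    dw = sum(2 * (w * x + b - y) * x for x, y in data) / n
    db = sum(2 * (w * x + b - y) for x, y in data) / n
    return dw, db


def numerical_gradient(f, value, eps=1e-6):
    """Central-difference estimate, used to sanity-check the analytic gradient."""
    return (f(value + eps) - f(value - eps)) / (2 * eps)


data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]  # generated by y = 2x + 1

w, b = 0.0, 0.0
dw_analytic, _ = gradients(w, b, data)
dw_numeric = numerical_gradient(lambda v: loss(v, b, data), w)
print("analytic dw:", dw_analytic, "numeric dw:", round(dw_numeric, 4))

learning_rate = 0.05
for _ in range(2000):
    dw, db = gradients(w, b, data)
    w -= learning_rate * dw
    b -= learning_rate * db

print("learned w, b:", round(w, 3), round(b, 3))
```

Comparing analytic and numerical gradients is the same debugging habit that scales up to full networks: if the two disagree, the bug is in the backward pass, not in the optimizer settings.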

Why it matters in practice: Debugging issues like loss not converging or overfitting is dramatically faster when you understand what's happening underneath. This book is the foundation for everything else on this list.

Who it's for:

- You've used PyTorch or TensorFlow but don't know what's actually happening internally

- You have a vague sense that you're "just using" neural nets without real understanding

- Mathematical notation in papers or docs makes you skip ahead

2. Natural Language Processing with Transformers — Build Real Implementation Skills with Hugging Face

Authors: Lewis Tunstall, Leandro von Werra, Thomas Wolf (Hugging Face engineers)

Foreword: Aurélien Géron

Publisher: O'Reilly

Audience: Engineers with Python and PyTorch basics, some GPU training experience


The official code repository has notebooks for each chapter, runnable directly in Google Colab or in a local GPU environment. Reading alongside working code noticeably speeds up understanding.

The three authors are Hugging Face engineers — the people who actually built the Transformers library. The book progresses through real use cases: text classification, named entity recognition, text generation, summarization, question answering. It's not theory-first; it's use-case-first with working code throughout.

Why it matters in practice: This book is what enabled me to actually implement fine-tuning with Hugging Face. Understanding the model architecture means you can identify the root cause of errors and make informed decisions about what to change. The coverage of distillation, quantization, and pruning also directly applies to inference optimization.
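As a taste of what quantization means at the lowest level, here is a minimal sketch of symmetric int8 quantization in plain Python. It is an illustration of the idea only, not the book's code or any library's API, and the weight values are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Map the integers back to approximate float values."""
    return [q * scale for q in quantized]


weights = [0.82, -1.27, 0.003, 0.45, -0.6]  # hypothetical float weights
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", quantized)
print("scale:", scale)
print("max round-trip error:", max_error)
```

Each weight now fits in one byte instead of four, at the price of a rounding error of at most half the scale. Note how the small weight 0.003 collapses to zero; that kind of loss is what evaluation after compression needs to catch.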

What you'll learn:

- Transformer architecture (Attention, encoder-decoder structure)

- Hugging Face ecosystem (Datasets, Trainer, Pipeline)

- Fine-tuning implementation

- Model compression: distillation, quantization, pruning

- Techniques for improving accuracy with limited labeled data

```python
# The kind of implementation this book teaches (text classification fine-tuning)
from transformers import (
    AutoModelForSequenceClassification,
    AutoTokenizer,
    Trainer,
    TrainingArguments,
)

model_name = "bert-base-uncased"

# The tokenizer is used to prepare the datasets before training.
tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForSequenceClassification.from_pretrained(model_name, num_labels=2)

training_args = TrainingArguments(
    output_dir="./results",
    num_train_epochs=3,
    per_device_train_batch_size=16,
    eval_strategy="epoch",  # named `evaluation_strategy` in older transformers releases
)

# train_dataset and eval_dataset are assumed to be tokenized datasets
# prepared beforehand (for example with the Datasets library).
trainer = Trainer(
    model=model,
    args=training_args,
    train_dataset=train_dataset,
    eval_dataset=eval_dataset,
)

trainer.train()
```

Who it's for:

- You've used Hugging Face pipelines but don't know how to customize beyond them

- You want to implement fine-tuning but don't know where to start

- You want the same foundational knowledge that working ML engineers in the West have

3. Hands-On Generative AI — Understand Audio and Image Generation by Building It

Authors: Omar Sanseviero, Pedro Cuenca, Apolinário Passos, Jonathan Whitaker (Hugging Face engineers and researchers)

Publisher: O'Reilly

Audience: Engineers with Python and ML basics who want hands-on generative AI experience


The official repository has notebooks for each chapter. Run the image and audio generation code alongside the text.

All four authors work at Hugging Face. The book covers both Transformer-based and Diffusion-based generative AI through hands-on implementation. The focus is on using pretrained models to solve real problems — not on abstract explanation.

Why it matters in practice: I read this to understand the mechanics of audio and image generation. Knowing how diffusion models remove noise to produce output gives you a principled basis for parameter tuning and model selection. It's the shift from "I'll try different settings and see" to "I understand why this model fits this use case."

What you'll learn:

- How diffusion models work (noise addition and iterative denoising)

- Text-to-image and audio generation pipelines

- Fine-tuning pretrained generative models

- Running large models under hardware constraints

- Multimodal AI applications
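The "noise addition" half of a diffusion model can be sketched in a few lines. The example below follows the common DDPM-style formulation with a linear beta schedule; the function names and the four-value toy "image" are assumptions for illustration, not the book's code.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, increasing linearly over the schedule."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar[t] = product of (1 - beta) up to step t: how much signal is left."""
    alpha_bars = []
    running = 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(x0, t, alpha_bars, rng):
    """Sample x_t from x_0 in one shot: sqrt(a_bar) * x0 + sqrt(1 - a_bar) * noise."""
    a_bar = alpha_bars[t]
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [
        math.sqrt(a_bar) * x + math.sqrt(1.0 - a_bar) * n for x, n in zip(x0, noise)
    ]
    return xt, noise


rng = random.Random(0)
alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
x0 = [1.0, -1.0, 0.5, 0.0]  # toy stand-in for image pixels

for t in (0, 499, 999):
    xt, _ = add_noise(x0, t, alpha_bars, rng)
    print(t, "signal kept:", round(alpha_bars[t], 4), [round(v, 2) for v in xt])
```

Early steps keep almost all of the signal and the last step is close to pure noise. Training teaches a network to predict the added noise at each step, and generation runs that prediction in reverse, which is why settings such as the number of sampling steps trade speed against quality.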

Who it's for:

- You use Stable Diffusion or Whisper but don't understand what's happening inside

- You're building AI products involving audio or image generation

- You want to understand Diffusion alongside Transformers to broaden your model selection judgment

4. AI Engineering — The Complete Picture for Building AI Products on Foundation Models

Author: Chip Huyen (former Stanford lecturer, widely respected ML author)

Publisher: O'Reilly

Audience: Engineers who can build with APIs but want to systematize evaluation, improvement, and production


Maintained by Chip Huyen directly. Code, resources, and post-publication updates are added here — worth watching alongside the book.

Chip Huyen's previous book, Designing Machine Learning Systems, has been a long-running reference in the ML engineering community. For this book, she interviewed over 100 practitioners from OpenAI, Google, Anthropic, NVIDIA, Meta, Hugging Face, LangChain, and LlamaIndex. The result goes well beyond a surface-level summary of real-world practice.

What you'll learn:

- How to decide whether to build a given AI application at all

- Hallucination: root causes and mitigation strategies

- Prompt engineering best practices

- RAG design principles

- How to build and evaluate agents

- When to fine-tune (and when not to)

- Inference cost optimization

- Feedback loop design for continuous improvement

For engineers who can use APIs but want to think seriously about accuracy, cost, and production — read the first three books on this list, then come to this one.

+α. Practical Statistics for Data Scientists — The Mathematical Foundation for Evaluating AI

Authors: Peter Bruce, Andrew Bruce, Peter Gedeck

Publisher: O'Reilly

Audience: Engineers who've jumped into deep learning but have gaps in statistical foundations


Statistical concepts appear constantly in ML papers and codebases — loss functions, evaluation metrics, A/B tests, confidence intervals. This book is for engineers who want to understand these properly rather than skim past them. R and Python examples are included throughout, making it accessible for engineers.

Why it fills a real gap: If you learned deep learning first, you can end up with shaky foundations around evaluation — "why this metric?", "is this improvement actually meaningful?" This book builds the statistical intuition that makes model improvement decisions more rigorous.
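One way to approach "is this improvement actually meaningful?" is a paired bootstrap over per-example results. The sketch below is an assumption-laden illustration: the `bootstrap_diff_ci` helper is hypothetical, and the two result lists are simulated rather than taken from any real model.

```python
import random


def bootstrap_diff_ci(correct_a, correct_b, num_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for accuracy(B) - accuracy(A).

    Both lists hold 1 (correct) or 0 (wrong) for the same evaluation examples,
    so each resample draws the same indices for both models.
    """
    rng = random.Random(seed)
    n = len(correct_a)
    diffs = []
    for _ in range(num_resamples):
        idx = [rng.randrange(n) for _ in range(n)]
        acc_a = sum(correct_a[i] for i in idx) / n
        acc_b = sum(correct_b[i] for i in idx) / n
        diffs.append(acc_b - acc_a)
    diffs.sort()
    lower = diffs[int((alpha / 2) * num_resamples)]
    upper = diffs[int((1 - alpha / 2) * num_resamples) - 1]
    return lower, upper


# Simulated per-example results for two hypothetical models on 200 examples.
sim = random.Random(42)
correct_a = [1 if sim.random() < 0.80 else 0 for _ in range(200)]
correct_b = [1 if sim.random() < 0.83 else 0 for _ in range(200)]

observed = (sum(correct_b) - sum(correct_a)) / len(correct_a)
lower, upper = bootstrap_diff_ci(correct_a, correct_b)
print("observed difference:", round(observed, 3))
print("95% interval:", round(lower, 3), "to", round(upper, 3))
```

If the interval comfortably excludes zero, the gain is less likely to be noise. If it straddles zero, a few points of accuracy on a 200-example test set may not mean much, and a larger evaluation set is the next step.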

Who it's for:

- You can't confidently explain what F1 score or AUC-ROC actually mean

- You're not sure how to correctly interpret A/B test results

- The evaluation sections of papers don't quite click
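If F1 is one of the metrics that doesn't quite click, computing it by hand once helps. Here is a minimal sketch for binary classification; the labels and predictions are made up for illustration.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Precision, recall, and F1 for the chosen positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean, so it stays low if either component is low.
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, f1


# Imbalanced toy data: 4 positives, 6 negatives.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]

accuracy = sum(1 for t, p in zip(y_true, y_pred) if t == p) / len(y_true)
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print("accuracy:", accuracy)
print("precision:", round(precision, 3), "recall:", round(recall, 3), "f1:", round(f1, 3))
```

Accuracy looks respectable here because negatives dominate, while F1 shows that half of the positives are being missed. That gap is the usual reason to prefer F1 on imbalanced data.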

Learning Roadmap for AI Engineers

| Step | Book | Purpose |
|---|---|---|
| Step 1 | Deep Learning from the Basics | Understand neural net internals through implementation |
| Step 2 | NLP with Transformers | Fine-tuning implementation with Hugging Face |
| Step 3 | Hands-On Generative AI | Extend into Diffusion and generative AI broadly |
| Step 4 | AI Engineering | Systematize evaluation, improvement, and production |
| Parallel | Practical Statistics for Data Scientists | Statistical grounding for evaluation and decisions |

Conclusion

Shipping something with AI and actually improving it over time are different skills. The second requires understanding what's inside the model, knowing what evaluation results mean, and being able to form hypotheses about what to try next.

Every book on this list was written by practitioners, Hugging Face engineers among them, and is built around knowledge that applies on the job, not in a classroom.

If you're unsure where to start, begin with the Transformers book. If you've already worked with models and touched Hugging Face, this one book will noticeably raise your level of understanding.

FAQ

Q: Do I need a strong math background?

For Deep Learning from the Basics, high school math (basic calculus and linear algebra) is enough. The Transformers and Generative AI books do include math, but the structure pairs each concept with code, so it's followable. If you want to understand the math deeply, read Practical Statistics in parallel.

Q: PyTorch or TensorFlow?

Most books here lean PyTorch. The Transformers book supports both, but PyTorch is the more common choice in current research and open-source model code, so it is worth becoming comfortable with it.

Q: Isn't Deep Learning from the Basics outdated?

The first edition is from 2016, but the fundamentals — backpropagation, gradient descent, CNNs — haven't changed. These are the same building blocks you need to understand Transformers and LLMs. You'll still need other books for the latest architectures, but this foundation is timeless.

Q: What's a tokenizer?

A tokenizer converts raw text into tokens — the units a language model actually processes. The same word can map to different numbers of tokens depending on the model, and this affects cost, context length, and model behavior in ways that aren't obvious until you know about it.

Q: What's RAG?

Retrieval-Augmented Generation. A technique where an LLM retrieves relevant information from an external knowledge base at inference time and uses it to generate a response. Effective for reducing hallucinations and keeping responses grounded in specific domain knowledge.
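The retrieve-then-generate flow can be sketched without any external service. The example below ranks documents by bag-of-words cosine similarity and builds a prompt from the best match. Real systems usually retrieve with embedding models and a vector index; the documents, prompt wording, and helper names here are hypothetical.

```python
import math
from collections import Counter


def bag_of_words(text):
    """Word counts as a very rough stand-in for an embedding."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, top_k=1):
    """Return the top_k documents most similar to the query."""
    q = bag_of_words(query)
    ranked = sorted(documents, key=lambda d: cosine(q, bag_of_words(d)), reverse=True)
    return ranked[:top_k]


def build_prompt(query, documents, top_k=1):
    """Put the retrieved text in front of the question before calling an LLM."""
    context = "\n".join(retrieve(query, documents, top_k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


documents = [
    "The refund window is 30 days from delivery",
    "Shipping takes 3 to 5 business days",
    "Support is available on weekdays",
]
query = "how many days is the refund window"

prompt = build_prompt(query, documents)
print(prompt)
```

The resulting prompt is what would be sent to the model. The quality of the answer then depends heavily on whether retrieval surfaced the right passage, which is why chunking and ranking get so much attention in RAG design.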

Q: What's hallucination?

When an LLM generates factually incorrect information with apparent confidence. It's one of the primary reliability challenges in AI products, and addressing it — through RAG, prompt design, evaluation pipelines, and fine-tuning — is a core skill in AI engineering.

This post contains affiliate links.
